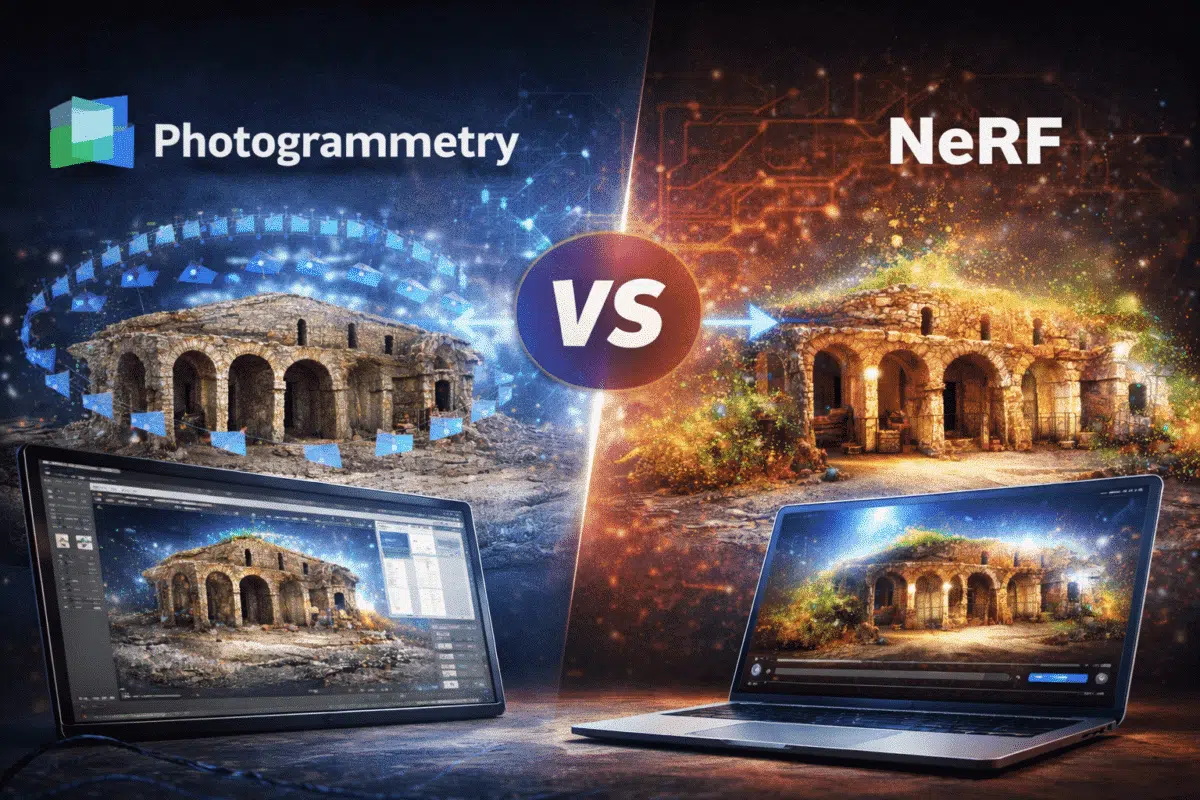

3D reconstruction technologies have evolved significantly over the past decade. Traditional photogrammetry software such as Agisoft Metashape has become a standard tool for generating accurate 3D models from photographs captured by drones, cameras, or smartphones.

In recent years, a newer technique called Neural Radiance Fields (NeRF), introduced in 2020, has gained attention within the computer vision and artificial intelligence communities. NeRF uses deep learning to reconstruct 3D scenes from a set of photographs with known camera poses and to produce highly realistic renderings of them.

This development has raised an important question for professionals working in photogrammetry and 3D reconstruction: Could NeRF technology eventually replace traditional photogrammetry tools like Agisoft Metashape?

To answer this question, it is important to understand how NeRF works, how it differs from classical photogrammetry, and what advantages and limitations each approach offers.

What is Photogrammetry?

Photogrammetry is a well-established method for reconstructing three-dimensional objects and environments from overlapping photographs. The technique relies on identifying common features across multiple images and using geometric calculations to determine the spatial position of each point.
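The geometric calculation at the heart of this process is triangulation. Once the same feature has been matched in two photographs and the camera positions are known, each observation defines a viewing ray, and the feature lies where the rays meet. The Python sketch below uses the simple midpoint method: because real rays rarely intersect exactly, it takes the point halfway along the shortest segment between them. It assumes camera centres and ray directions are already known, the helper names are hypothetical, and production photogrammetry software typically refines all cameras and points jointly (bundle adjustment) rather than triangulating points one at a time like this.

```python
# Midpoint triangulation: estimate a 3D point from two viewing rays.
# Camera centres and ray directions are assumed to be known already.

def sub(a, b):
    return [a[i] - b[i] for i in range(3)]

def add(a, b):
    return [a[i] + b[i] for i in range(3)]

def scale(a, s):
    return [x * s for x in a]

def dot(a, b):
    return sum(a[i] * b[i] for i in range(3))

def triangulate_midpoint(c1, d1, c2, d2):
    """Midpoint of the shortest segment between rays c1 + s*d1 and c2 + t*d2."""
    w0 = sub(c1, c2)
    a, b, c = dot(d1, d1), dot(d1, d2), dot(d2, d2)
    d, e = dot(d1, w0), dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    s = (b * e - c * d) / denom  # parameter of the closest point on ray 1
    t = (a * e - b * d) / denom  # parameter of the closest point on ray 2
    p1 = add(c1, scale(d1, s))
    p2 = add(c2, scale(d2, t))
    return scale(add(p1, p2), 0.5)

# Two exposures 4 units apart observing the same feature.
camera_1, ray_1 = [0.0, 0.0, 0.0], [2.0, 1.0, 5.0]
camera_2, ray_2 = [4.0, 0.0, 0.0], [-2.0, 1.0, 5.0]
point = triangulate_midpoint(camera_1, ray_1, camera_2, ray_2)
print(point)  # [2.0, 1.0, 5.0]
```

Nearly parallel rays (a very short baseline between exposures) make the denominator small and the estimate unstable, which is one reason overlapping photographs need enough change in viewpoint.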

Software like Agisoft Metashape follows a typical photogrammetry workflow:

- Image alignment and camera calibration

- Sparse point cloud generation

- Dense point cloud reconstruction

- Mesh generation

- Texture mapping

This process produces accurate 3D models that can be measured and analyzed. Photogrammetry is widely used in industries such as surveying, construction, archaeology, mining, and drone mapping.

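The key advantage of photogrammetry is its ability to produce metric models with reliable geometric accuracy, provided the model is scaled or georeferenced using ground control points, scale bars, or camera GNSS positions.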
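Images alone fix the shape of a model but not its size, so something external has to supply scale. As a minimal sketch of the idea, the Python code below rescales model coordinates so that the distance between two markers matches a measured scale bar. The function `scale_to_metric` and the numbers are hypothetical; real projects typically combine several scale bars or ground control points and also solve for rotation and translation to georeference the model.

```python
import math

def scale_to_metric(points, marker_a, marker_b, true_length_m):
    """Hypothetical helper: scale model coordinates about the origin so that the
    model distance between two markers equals a length measured on site."""
    model_length = math.dist(marker_a, marker_b)
    if model_length == 0:
        raise ValueError("markers must be distinct")
    factor = true_length_m / model_length
    return factor, [[coord * factor for coord in p] for p in points]

factor, metric_points = scale_to_metric(
    points=[[0.5, 0.0, 0.0], [1.0, 2.0, 0.25]],
    marker_a=[0.0, 0.0, 0.0],
    marker_b=[0.5, 0.0, 0.0],   # the scale bar is 0.5 units long in the model
    true_length_m=2.0,          # and 2.0 m long in reality
)
print(factor)         # 4.0
print(metric_points)  # [[2.0, 0.0, 0.0], [4.0, 8.0, 1.0]]
```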

What are Neural Radiance Fields (NeRF)?

Neural Radiance Fields represent a completely different approach to 3D scene reconstruction. Instead of reconstructing explicit geometry such as meshes or point clouds, NeRF uses a neural network to learn a function that describes the color and density of the scene at every point in space.

A NeRF model is trained on a collection of images taken from different viewpoints. For each pixel, a ray is cast from the camera into the scene; the network predicts the color and density at sample points along that ray, and these predictions are combined into a single pixel color that can be compared with the photograph.

Once trained, the model can render new views of the scene from viewpoints that were never photographed, with the best quality near the original camera positions.

In simple terms, NeRF reconstructs a scene as a continuous volumetric representation rather than a discrete geometric model.

This allows NeRF to produce extremely realistic renderings that capture fine visual details and view-dependent lighting effects such as specular highlights.

How NeRF Works

The core idea behind Neural Radiance Fields is to represent a 3D scene using a neural network that maps spatial coordinates and viewing directions to color and density values.
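In the notation of the original NeRF paper, the network F_Θ with weights Θ maps a 3D position x and a viewing direction d to an RGB color c and a volume density σ. A pixel color Ĉ is obtained by integrating along the camera ray r(t) = o + t·d between near and far bounds t_n and t_f, where T(t) is the transmittance (the fraction of light not yet absorbed before reaching t). Training minimizes the squared difference between rendered colors Ĉ and observed pixel colors C over a batch of rays R. The loss is shown in simplified form; the original method sums it over a coarse and a fine network.

```latex
F_\Theta : (\mathbf{x}, \mathbf{d}) \mapsto (\mathbf{c}, \sigma)

\mathbf{r}(t) = \mathbf{o} + t\,\mathbf{d}

\hat{C}(\mathbf{r}) = \int_{t_n}^{t_f} T(t)\,\sigma(\mathbf{r}(t))\,\mathbf{c}(\mathbf{r}(t), \mathbf{d})\,dt,
\qquad
T(t) = \exp\!\left(-\int_{t_n}^{t} \sigma(\mathbf{r}(s))\,ds\right)

\mathcal{L} = \sum_{\mathbf{r} \in \mathcal{R}} \left\lVert \hat{C}(\mathbf{r}) - C(\mathbf{r}) \right\rVert_2^2
```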

The training process typically involves the following steps:

- Capture multiple images of the scene from different viewpoints

- Estimate camera poses (positions and orientations), typically with structure-from-motion software such as COLMAP

- Train a neural network to learn the scene representation

- Render new views using volumetric rendering techniques

During training, the network is optimized so that images rendered from the known camera poses match the input photographs. Once it fits the training views well, the model can synthesize new images with impressive realism.

However, this process can require significant computational resources, particularly during training.
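The rendering step in the list above can be illustrated without a neural network. The Python sketch below replaces the trained network with a hypothetical analytic field, a solid red sphere of constant density, and applies NeRF's discrete compositing rule: each sample along a ray contributes its color weighted by its opacity, 1 - exp(-σδ) for density σ over an interval of length δ, and by the transmittance of everything in front of it. The field, the sample count and the evenly spaced samples (the original method uses randomized, stratified sampling) are simplifying assumptions.

```python
import math

def toy_field(x, y, z, view_dir):
    """Hypothetical stand-in for a trained NeRF network: returns (rgb, density).

    A solid red sphere of radius 1 at the origin. view_dir is ignored here,
    whereas a real NeRF uses it to model view-dependent color.
    """
    if x * x + y * y + z * z <= 1.0:
        return (1.0, 0.0, 0.0), 5.0  # red, fairly opaque
    return (0.0, 0.0, 0.0), 0.0      # empty space

def render_ray(origin, direction, near, far, n_samples=128):
    """Composite the color along one ray (direction assumed to be unit length)."""
    delta = (far - near) / n_samples
    transmittance = 1.0
    color = [0.0, 0.0, 0.0]
    for i in range(n_samples):
        t = near + (i + 0.5) * delta  # sample at the centre of each interval
        point = [origin[k] + t * direction[k] for k in range(3)]
        rgb, sigma = toy_field(*point, direction)
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this interval
        weight = transmittance * alpha
        for k in range(3):
            color[k] += weight * rgb[k]
        transmittance *= 1.0 - alpha  # fraction of light that passes this sample
    return color, transmittance

# One ray through the centre of the sphere and one that misses it.
hit, t_hit = render_ray([0.0, 0.0, -3.0], [0.0, 0.0, 1.0], near=0.0, far=6.0)
miss, t_miss = render_ray([0.0, 2.0, -3.0], [0.0, 0.0, 1.0], near=0.0, far=6.0)
print(hit, t_hit)    # red channel close to 1.0, very little transmittance left
print(miss, t_miss)  # [0.0, 0.0, 0.0] 1.0
```

In a real NeRF, `toy_field` would be the trained network, queried at every sample of every pixel's ray, which is one reason training and rendering are computationally expensive.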

Key Differences Between NeRF and Photogrammetry

Although both techniques rely on photographs as input, NeRF and photogrammetry use fundamentally different reconstruction strategies.

Photogrammetry focuses on generating explicit geometric structures such as point clouds and meshes. NeRF focuses on learning a continuous representation of the scene for rendering purposes.

The main differences include:

- Geometry representation: Photogrammetry produces meshes and point clouds, while NeRF represents scenes as volumetric neural fields.

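- Accuracy: Photogrammetry can produce metric models suitable for measurements, while NeRF is optimized for visual appearance and offers no built-in guarantee of metric accuracy.

- Rendering quality: NeRF often produces more photorealistic visualizations than textured meshes, particularly for view-dependent effects.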

- Processing workflow: Photogrammetry uses geometric algorithms, while NeRF relies on neural network training.

Because of these differences, the two approaches are often used for different purposes.

Advantages of Photogrammetry with Metashape

Agisoft Metashape remains one of the most reliable tools for professional 3D reconstruction workflows.

Some of its key advantages include:

- High geometric accuracy

- Support for georeferenced datasets

- Generation of orthomosaics and digital elevation models (DEMs)

- Compatibility with GIS and CAD systems

- Reliable mesh and texture outputs

These capabilities make Metashape essential for industries where accurate measurements and spatial analysis are required.

For example, surveyors and engineers rely on photogrammetry models to calculate distances, volumes, and terrain elevations.
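Volume calculations of this kind reduce to simple arithmetic on a DEM grid. The Python sketch below, a minimal example under assumed inputs, sums the height of each cell above or below a flat base elevation multiplied by the cell area. The 3 × 3 grid, the 0.5 m cell size and the 100 m base level are made-up values and the function name is hypothetical; dedicated GIS and photogrammetry tools offer more ways to define the reference surface.

```python
def volumes_relative_to_base(dem, cell_size_m, base_elevation_m, nodata=None):
    """Hypothetical helper: volume of terrain above and below a flat base plane.

    dem is a list of rows of cell elevations in metres; cells equal to nodata are skipped.
    """
    cell_area = cell_size_m * cell_size_m
    above = below = 0.0
    for row in dem:
        for z in row:
            if nodata is not None and z == nodata:
                continue
            dz = z - base_elevation_m
            if dz > 0:
                above += dz * cell_area   # material sitting above the base plane
            elif dz < 0:
                below += -dz * cell_area  # depressions below the base plane
    return above, below

# Made-up 3 x 3 DEM with 0.5 m cells (each cell covers 0.25 m²).
dem = [
    [100.0, 100.5, 100.0],
    [100.5, 102.0, 100.5],
    [100.0, 100.5, 99.5],
]
above, below = volumes_relative_to_base(dem, cell_size_m=0.5, base_elevation_m=100.0)
print(above, below)                  # 1.0 0.125
print(max(max(row) for row in dem))  # highest cell elevation: 102.0
```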

Advantages of NeRF Technology

NeRF offers several advantages that make it attractive for visualization and immersive applications.

The most important benefits include:

- Extremely realistic rendering

- Convincing reproduction of view-dependent effects such as lighting and reflections

- Ability to generate novel viewpoints

- Efficient representation of complex scenes

These features make NeRF particularly useful for virtual reality, cinematic production, and interactive environments.

Because NeRF models represent scenes as continuous volumes, they can capture subtle visual effects that traditional mesh models may struggle to reproduce.

Limitations of NeRF

Despite its impressive capabilities, NeRF still has several limitations when compared to photogrammetry.

The most important limitation is the lack of explicit geometry. Since NeRF models do not produce traditional meshes or point clouds, it can be difficult to perform measurements or engineering analysis.
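A common workaround is to derive approximate geometry from the trained field, for example an expected depth per ray computed from the same compositing weights that produce the pixel color. The Python sketch below assumes the densities along one ray have already been queried from a network (the values are invented) and normalizes by the ray's accumulated opacity. The result is an estimate shaped by how well the density field converged, not a measured surface: density spread along the ray yields a blurred depth, which is one reason such output falls short of survey-grade geometry.

```python
import math

def expected_depth(depths, densities):
    """Expected ray-termination depth from volume-rendering weights.

    depths: increasing sample distances along the ray; densities: sigma at each sample.
    Each sample's interval runs to the next sample; the last one reuses the
    previous spacing (a simplification). Returns (depth or None, opacity).
    """
    deltas = [b - a for a, b in zip(depths, depths[1:])]
    deltas.append(deltas[-1] if deltas else 0.0)
    transmittance, weighted_sum, opacity = 1.0, 0.0, 0.0
    for t, sigma, delta in zip(depths, densities, deltas):
        alpha = 1.0 - math.exp(-sigma * delta)
        weight = transmittance * alpha
        weighted_sum += weight * t
        opacity += weight
        transmittance *= 1.0 - alpha
    if opacity == 0.0:
        return None, 0.0  # the ray passed through empty space
    return weighted_sum / opacity, opacity

depths = [1.0, 1.5, 2.0, 2.5, 3.0]
sharp = expected_depth(depths, [0.0, 0.0, 50.0, 50.0, 0.0])  # dense, well-defined surface
diffuse = expected_depth(depths, [0.0, 1.0, 1.0, 1.0, 0.0])  # thin, spread-out density
print(sharp)    # depth very close to 2.0, opacity close to 1.0
print(diffuse)  # depth about 1.84, opacity only about 0.78
```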

Other limitations include:

- Long training times for large scenes

- High computational requirements

- Limited integration with surveying workflows

- Difficulty exporting geometry for CAD applications

For these reasons, NeRF is currently better suited to visualization tasks than to precise modeling.

Hybrid Workflows: The Best of Both Worlds

Rather than replacing photogrammetry, NeRF may eventually complement existing reconstruction workflows.

A possible future approach could combine the strengths of both technologies:

- Photogrammetry for accurate geometry

- NeRF for photorealistic visualization

In such workflows, software like Metashape could generate precise 3D geometry, while NeRF-based systems could enhance rendering realism.

This combination could enable new applications in digital twins, immersive mapping, and interactive 3D environments.
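One practical link between the two technologies is reusing the camera poses solved by photogrammetry instead of estimating them again for NeRF training. The Python sketch below converts camera-to-world matrices from an OpenCV-style camera convention (x right, y down, z forward), assumed here for the exported poses, to the OpenGL-style convention (x right, y up, z backward) used by the original NeRF synthetic datasets, and arranges them in that dataset's transforms.json layout (camera_angle_x plus a list of frames with file_path and transform_matrix). The pose, focal length and file name are placeholders, and `to_nerf_transforms` is a hypothetical helper; verify the axis convention of your Metashape export and the exact format your NeRF implementation expects, since these differ between tools.

```python
import json
import math

# Right-multiplying a camera-to-world matrix by this flips the camera's y and z axes:
# OpenCV-style (x right, y down, z forward) to OpenGL-style (x right, y up, z backward).
FLIP_YZ = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, -1.0, 0.0, 0.0],
    [0.0, 0.0, -1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]

def matmul4(a, b):
    """Product of two 4 x 4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]

def to_nerf_transforms(cameras, focal_px, image_width_px):
    """Hypothetical converter: cameras is a list of (image_path, 4 x 4 camera-to-world
    matrix in OpenCV-style axes); returns a dict in the transforms.json layout."""
    frames = [
        {"file_path": path, "transform_matrix": matmul4(c2w, FLIP_YZ)}
        for path, c2w in cameras
    ]
    return {
        # horizontal field of view in radians, from focal length and image width in pixels
        "camera_angle_x": 2.0 * math.atan(image_width_px / (2.0 * focal_px)),
        "frames": frames,
    }

# Placeholder pose: a camera at the origin looking along +z in OpenCV-style axes.
identity = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
data = to_nerf_transforms([("images/IMG_0001.JPG", identity)], focal_px=3000.0, image_width_px=6000)
print(json.dumps(data, indent=2))
```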

The Future of AI in 3D Reconstruction

The emergence of technologies like NeRF demonstrates how artificial intelligence is transforming the field of 3D reconstruction.

Research in neural rendering is progressing rapidly, and newer approaches such as 3D Gaussian Splatting, which represents a scene with explicit 3D Gaussian primitives, already render in real time and train faster than the original NeRF.

However, photogrammetry remains a mature and reliable technology with decades of development behind it.

For professional applications requiring accuracy and reproducibility, tools like Agisoft Metashape are likely to remain essential for many years.

Conclusion

Neural Radiance Fields represent an exciting new direction in the world of 3D reconstruction and computer graphics. Their ability to generate highly realistic renderings from photographs demonstrates the growing impact of artificial intelligence in this field.

However, NeRF does not yet replace traditional photogrammetry tools like Agisoft Metashape. Photogrammetry continues to provide the accurate geometric data required for surveying, mapping, and engineering applications.

Rather than competing directly, these technologies may evolve together, combining AI-driven rendering techniques with the geometric precision of photogrammetry.

Understanding the strengths and limitations of both approaches will help professionals choose the best tools for their specific projects in the rapidly evolving world of 3D reconstruction.